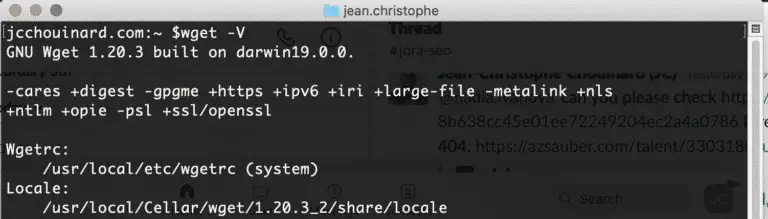

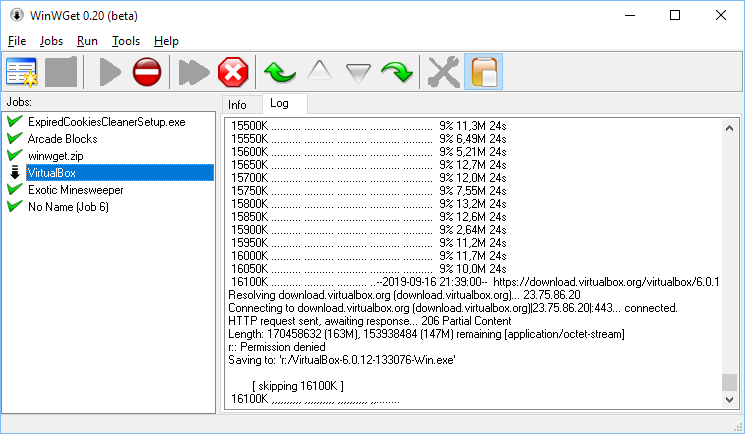

Nanopore GUPPY 3.1.5 basecalling on UBUNTU 18.04 using nvidia docker, RTX 2080 & server mode to share GPU machine as a service

Travelling to London is a tad too expensive, so I was glad to be able to watch Nanopore’s London Calling 2019 online. And, once again, I was inspired by the huge progress made. On the ground level, I realized that what I can do to improve the sequencing pace in the lab is to upgrade our Guppy basecaller and to give more people access to it. The challenge is: we have only ONE machine with the RTX GPU in the lab, and many people who wish to access it and also to try their hand at learning basecalling/bioinformatics.

So in this article, we document a way to provide Guppy GPU basecalling as a service within the lab’s local area network, i.e. run Guppy 3.1.5 in server mode, hosted on an Ubuntu 18.04 desktop, using docker containers in nvidia runtime mode. On the client side, users can install the nanopore basecaller and switch over to GPU basecalling simply by specifying the IP address and port of this machine. This can also be considered an upgrade from my previous article, which covered the standalone version.

The main steps are as follows: we will set up a CUDA docker container, run it, install the latest Guppy and dependencies, run it in server mode, commit changes, run the container and map ports, prepare the client side, and profit from the client side with GPU basecalling.

First we start with getting the docker container. For installing docker etc., you can follow the previous article.

`docker pull nvidia/cuda:9.0-cudnn7-devel-ubuntu16.04`

To be quick, we fire up the container to install Guppy. Yes, I could write a Dockerfile, but I think for this article it will be nice to go through it step by step to illustrate the thought process. So, we will run the container and commit the changes after installing Guppy.

`docker run --runtime=nvidia --name -i -t -v nvidia/cuda:9.0-cudnn7-devel-ubuntu16.04 /bin/bash`

Next, we wget the Guppy deb file:

`wget -q`

Guppy 3.1.5 requires additional packages to work, so let’s grab those as well:

`apt-get install libboost-all-dev`

Just in case you have a previous version of Guppy installed in your current container, i.e. you decided not to follow the steps and used your own container, you might want to uninstall the previous version:

`apt-get remove ont-guppy`

Now we install the Guppy deb file:

`dpkg -i --ignore-depends=nvidia-384,libcuda1-384 ont_guppy_3.1.5-1~xenial_b`

At this stage, we have the latest Guppy, all ready to run if you just want to run it locally (as per the previous article).

Here’s the branch point: to use this container as a server and share the GPU resources with the rest of the lab, we will try server mode!

Before server mode, let’s commit all the changes we made to the container:

`docker commit :cuda_9.0-cudnn7-devel-ubuntu16.04`

Now we will run it a tad differently from before: mainly, we will map the ports, i.e. we map the container port 5555 to the localhost port 5556 (you can change this to any port you wish). If you have MinKNOW running on this same machine, port 5555 is already taken, which is the reason I map it to port 5556. So, let’s open port 5556 and check it. Open a terminal on the Ubuntu machine:

`sudo ufw allow 5556`

`sudo ufw status verbose`

OK, with the ports done, let’s fire up the docker container with the proper port mapping:

`docker run --runtime=nvidia -p 5556:5555 -it :cuda_9.0-cudnn7-devel-ubuntu16.04-guppyGPU_3.15 bash`

And once in the container, we run Guppy in server mode with the config you want (here we have dna_r9.4.1_450bps). Remember the device option to specify usage of the GPU:

`guppy_basecall_server --config /opt/ont/guppy/data/dna_r9.4.1_450bps_hac.cfg --log_path --port 5555 --device "cuda:0"`
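Before pointing a client at the server, it can help to confirm that the mapped port is actually reachable. Below is a minimal sketch of such a check, not part of the original setup. It assumes `bash` (for its `/dev/tcp` redirection) and the coreutils `timeout` command are available. The function names `valid_port` and `port_open` and the variables `SERVER_IP` and `SERVER_PORT` are made up for illustration. The default of 127.0.0.1 checks from the GPU machine itself; from another lab machine you would set `SERVER_IP` to the GPU machine's LAN address.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check that the host port mapped to the guppy
# server container (5556 in this article) accepts TCP connections.

# Return 0 if the argument is an integer between 1 and 65535.
valid_port() {
    case "$1" in
        ''|*[!0-9]*) return 1 ;;   # empty or contains a non-digit
    esac
    [ "$1" -ge 1 ] && [ "$1" -le 65535 ]
}

# Return 0 if a TCP connection to host:port succeeds within 3 seconds.
# Uses bash's /dev/tcp redirection, run in a child bash under `timeout`.
port_open() {
    local host="$1" port="$2"
    valid_port "$port" || return 1
    timeout 3 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Assumed defaults: localhost and the host port used in this article.
SERVER_IP="${SERVER_IP:-127.0.0.1}"
SERVER_PORT="${SERVER_PORT:-5556}"

if port_open "$SERVER_IP" "$SERVER_PORT"; then
    echo "port ${SERVER_PORT} on ${SERVER_IP} is reachable"
else
    echo "cannot reach ${SERVER_IP}:${SERVER_PORT}; check ufw and the docker port mapping"
fi
```

A successful connection only shows that something is listening on that port. It does not prove that the listener is the Guppy server, so treat it as a first sanity check of the ufw rule and the `-p 5556:5555` mapping.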
